AI is moving beyond copilots into autonomous agents that can access enterprise data, trigger workflows, and take real actions across systems.

While this shift unlocks significant productivity gains, it also introduces new risks around data exposure, oversharing, and uncontrolled AI access to sensitive information.

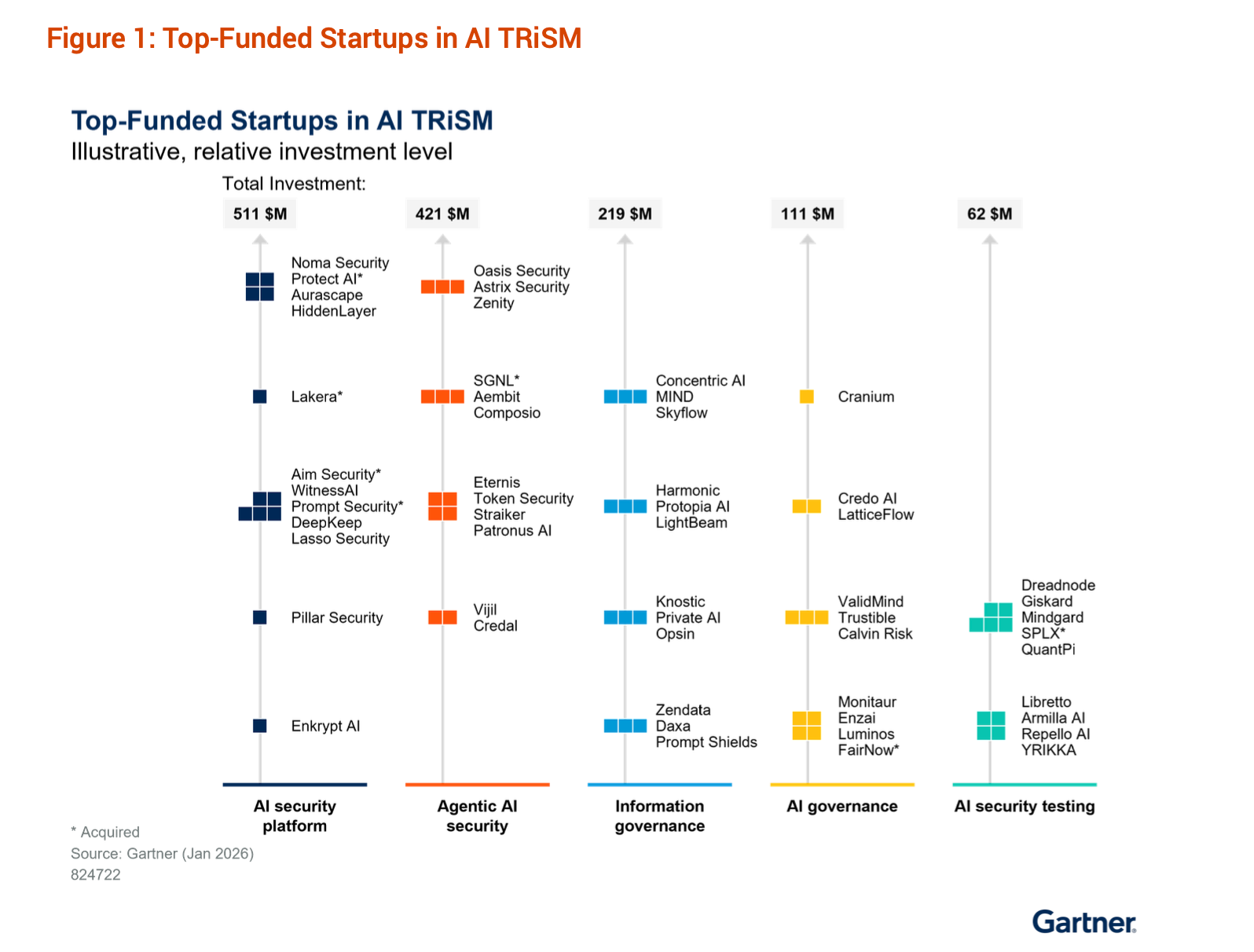

A recent Gartner analysis of AI TRiSM startups shows how emerging security vendors are addressing these challenges across the AI ecosystem. In the report, Daxa is recognized among startups building solutions in the Information Governance category, an increasingly critical layer for secure AI adoption.

As enterprises accelerate AI deployment, information governance is emerging as the foundation for responsible AI operations.

Gartner’s AI TRiSM Framework: The Five Pillars of AI Security

Figure: Gartner’s AI TRiSM landscape, showing startups across five segments: AI security platforms, agentic AI security, information governance, AI governance, and AI security testing. Daxa appears in the Information Governance segment.

According to the Gartner research on AI Trust, Risk and Security Management (AI TRiSM), organizations must address AI risks across five core categories:

- AI Security Platforms

- Agentic AI Security

- Information Governance

- AI Governance

- AI Security Testing

These five areas collectively define the security and governance stack required for safe AI deployment in enterprises.

In the report, Gartner analyzed 120 early-stage startups that collectively raised over $1.7 billion in funding, highlighting how rapidly the AI security ecosystem is evolving.

Among these categories, Information Governance has become one of the most critical layers, particularly as organizations deploy AI agents and data-driven AI applications.

Gartner Highlights Information Governance as a Core AI TRiSM Category

Information governance for AI focuses on managing how data is discovered, accessed, and protected across the entire AI lifecycle.

According to the Gartner analysis, this governance layer covers:

- Data discovery and classification

- Privacy protection and data minimization

- Access control and policy enforcement

- Runtime monitoring of AI data usage

The goal is to prevent risks such as:

- Sensitive data exposure through AI prompts

- Oversharing across internal systems

- Data leakage to external AI services

- AI agents accessing unauthorized information

In short, information governance ensures that AI systems interact with enterprise data safely and within policy boundaries.

Why Information Governance Is Critical for Agentic AI

The rise of agentic AI systems introduces a new class of data governance challenges.

Unlike traditional applications, AI agents can:

- Query multiple enterprise data sources

- Combine information across systems

- Interact autonomously with APIs and tools

- Generate outputs dynamically based on context

Autonomous agents don’t just increase data exposure risk; they also introduce action-level risk across enterprise systems.

Unlike traditional AI use cases that primarily involve reading or generating data, agentic systems can:

- Modify records in CRM and databases

- Trigger workflows across internal tools

- Execute transactions or API calls

- Delete or overwrite critical information

This fundamentally changes the risk model.

Earlier AI risks were about data exposure (read).

With agentic AI, the bigger risk is system impact (write, modify, delete).

The primary concern is no longer just “What data can the AI see?”

It becomes “What actions can the AI take with that data?”

For example:

An AI agent handling customer support may not only access user data but also update account details, issue refunds, or modify service configurations. Without proper governance, this creates risks such as:

- Unauthorized or incorrect data modifications

- Accidental deletion of critical records

- Irreversible system changes triggered by faulty reasoning

- Privilege escalation through chained actions

This makes agentic AI closer to an autonomous operator than a passive assistant.

As a result, information governance must evolve beyond data protection to include:

- Action-level access control (what agents can do, not just what they can see)

- Context-aware permissions based on role, intent, and data sensitivity

- Real-time monitoring of both data access and system actions

- Guardrails to prevent destructive or irreversible operations
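
The controls listed above can be sketched as a small authorization check. This is a minimal illustration only, not Daxa's implementation or anything described in the Gartner report: the role names, resource names, `POLICY` table, and `authorize` function are all hypothetical, and intent-based permissions are left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Set, Tuple


class Action(Enum):
    READ = "read"
    UPDATE = "update"
    DELETE = "delete"


# Assumption: which actions count as destructive or irreversible.
DESTRUCTIVE: Set[Action] = {Action.DELETE}

# Hypothetical allow-list: role -> resource -> permitted actions.
POLICY: Dict[str, Dict[str, Set[Action]]] = {
    "support_agent": {"crm.account": {Action.READ, Action.UPDATE}},
    "admin_agent": {"crm.account": {Action.READ, Action.UPDATE, Action.DELETE}},
}


@dataclass(frozen=True)
class AgentRequest:
    role: str
    action: Action
    resource: str
    sensitivity: str = "low"      # "low" or "high" (illustrative labels)
    human_approved: bool = False


def authorize(req: AgentRequest) -> Tuple[bool, str]:
    """Deny by default; allow only what the policy names explicitly."""
    allowed = POLICY.get(req.role, {}).get(req.resource, set())
    if req.action not in allowed:
        return False, "action not permitted for this role"
    # Guardrail: destructive operations always need a human in the loop.
    if req.action in DESTRUCTIVE and not req.human_approved:
        return False, "destructive action needs human approval"
    # Context-aware rule: writes to high-sensitivity data need approval too.
    if (req.sensitivity == "high" and req.action is not Action.READ
            and not req.human_approved):
        return False, "write to high-sensitivity data needs human approval"
    return True, "allowed"
```

In this sketch, a support agent can update a low-sensitivity account record but cannot delete it, and even a role that is allowed to delete is blocked until `human_approved` is set. The point of the design is that read permission and write permission are decided separately.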

Daxa Recognized by Gartner in the Information Governance Category

In the Gartner AI TRiSM startup landscape, Daxa is highlighted among companies addressing information governance for AI systems.

This category focuses on controlling how AI systems access, use, and act on enterprise data.

Daxa’s approach centers on enabling organizations to:

- Gain visibility into AI data access and usage

- Enforce governance policies for AI agents and applications

- Monitor AI interactions with enterprise data in real time

- Prevent sensitive data exposure during AI operations

This capability becomes increasingly important as AI moves beyond experimental use cases into core enterprise workflows.

Instead of relying only on model guardrails, enterprises need continuous data governance across the AI lifecycle.

Daxa extends beyond traditional data governance by enforcing both data-access and action-level controls, so that AI agents operate within policy across read and write operations in enterprise systems.

How AI Information Governance Platforms Protect Enterprise Data

Modern information governance platforms combine several layers of protection.

1. Data Discovery and Classification

Before organizations can secure data used by AI, they must first identify and classify it.

AI governance platforms help enterprises automatically discover:

- Sensitive data

- Intellectual property

- Personally identifiable information (PII)

- Compliance-regulated datasets

This step provides visibility into what data exists and where it is stored.
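
As a rough illustration of the discovery step, the sketch below scans a set of documents and labels the ones that appear to contain PII. It is a simplified example under stated assumptions: the two regular expressions, the label names, and the `classify` and `discover` functions are hypothetical, and production classifiers typically use far richer detection than pattern matching.

```python
import re
from typing import Dict, Set

# Illustrative patterns only; real detection needs validation and context.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify(text: str) -> Set[str]:
    """Return the set of sensitive-data labels found in one text."""
    return {label for label, pattern in PATTERNS.items() if pattern.search(text)}


def discover(documents: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each document location to its labels, skipping clean documents."""
    findings = {}
    for location, text in documents.items():
        labels = classify(text)
        if labels:
            findings[location] = labels
    return findings
```

Calling `discover` on a mapping of file paths to contents returns only the paths where something sensitive was found, which is the "what exists and where" inventory that later controls depend on.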

2. Data Privacy and Protection Controls

Once data is identified, governance platforms apply safeguards such as:

- Data masking

- Redaction

- De-identification

- Privacy-enhancing technologies

These controls allow AI systems to leverage data while minimizing the risk of exposure.
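
Redaction and masking can be sketched in a few lines. The example below is an assumption-laden illustration rather than a description of any specific product: the patterns, the placeholder tokens `[EMAIL]` and `[SSN]`, and the function names `redact` and `mask_tail` are all hypothetical.

```python
import re

# Illustrative patterns only; real systems use broader, validated detectors.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace detected values with placeholder tokens before text reaches a model."""
    text = EMAIL.sub("[EMAIL]", text)
    text = SSN.sub("[SSN]", text)
    return text


def mask_tail(value: str, visible: int = 4) -> str:
    """Mask everything except the last `visible` characters."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]
```

Redaction removes the value entirely, while masking keeps a short suffix so that a human can still recognize the record. Which one fits depends on whether the downstream AI task needs any part of the original value.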

3. Policy-Based Access Controls for AI Systems

AI governance platforms enforce policies defining:

- Which users or AI agents can access specific data

- What data can be shared with AI systems

- How data may appear in AI outputs

This ensures that AI systems follow least-privilege access principles.
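
One way to picture least-privilege enforcement is a filter that strips fields an agent is not cleared to see before the record is passed to the AI system. The sketch below is hypothetical throughout: the field labels, the agent names, the `CLEARANCE` table, and `filter_record` are illustrative assumptions, not a real platform's API.

```python
from typing import Any, Dict, Set

# Hypothetical classification of record fields.
FIELD_LABELS: Dict[str, str] = {
    "name": "internal",
    "ticket_text": "internal",
    "email": "pii",
    "salary": "restricted",
}

# Hypothetical clearance: which labels each agent may read.
CLEARANCE: Dict[str, Set[str]] = {
    "support_agent": {"internal", "pii"},
    "analytics_agent": {"internal"},
}


def filter_record(agent: str, record: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only fields whose label the agent is cleared for.

    Unknown agents and unlabeled fields are dropped (deny by default).
    """
    allowed = CLEARANCE.get(agent, set())
    return {
        field: value
        for field, value in record.items()
        if FIELD_LABELS.get(field) in allowed
    }
```

Because unlabeled fields are dropped rather than passed through, a newly added column stays hidden from agents until someone classifies it, which is the conservative default least privilege calls for.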

4. Runtime Monitoring of AI Data Usage

Governance must extend beyond model training to cover how AI systems use data at runtime.

Modern platforms continuously inspect AI interactions to detect:

- Sensitive data leakage

- Unauthorized access patterns

- Policy violations

- Oversharing across systems

This runtime visibility helps organizations maintain control even as AI systems evolve.
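
A runtime monitor can be sketched as a function that inspects each AI interaction and returns any findings. This is a deliberately small illustration under assumptions: the `Event` shape, the `ALLOWED_RESOURCES` table, the single SSN pattern, and the finding names are hypothetical and stand in for much richer detection logic.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Set

# Illustrative pattern only.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical: resources each agent is expected to touch.
ALLOWED_RESOURCES: Dict[str, Set[str]] = {
    "support_agent": {"crm.account", "kb.articles"},
}


@dataclass(frozen=True)
class Event:
    agent: str
    resource: str   # data source the agent accessed
    output: str     # text the agent produced


def inspect_event(event: Event) -> List[str]:
    """Return policy findings for one interaction (empty list if clean)."""
    findings = []
    if event.resource not in ALLOWED_RESOURCES.get(event.agent, set()):
        findings.append("unauthorized_access")
    if SSN.search(event.output):
        findings.append("sensitive_data_leak")
    return findings
```

In practice the findings would feed an alerting or blocking step. The sketch only shows the inspection itself, applied per interaction rather than once at training time.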

The Future of AI Security Is Data-Centric Governance

The Gartner report highlights a fundamental shift in AI security strategy.

Security strategy is shifting from a narrow focus on model safety and AI application protection toward data-centric governance.

This shift reflects a simple reality:

Even the most secure AI model can become a risk if it has unrestricted access to sensitive enterprise data.

Information governance platforms ensure that AI systems operate within clearly defined data policies, protecting organizations from compliance risks, privacy violations, and unintended data exposure.

Conclusion: AI Governance Starts With Information Governance

As enterprises scale AI adoption, data governance will become the backbone of AI security.

The recognition of Daxa within the Gartner AI TRiSM ecosystem highlights the growing importance of solutions that secure how AI systems access and interact with enterprise data.

Organizations that successfully deploy AI at scale will be those that embed information governance into every stage of the AI lifecycle, from data discovery and privacy protection to runtime monitoring and policy enforcement.

In the era of agentic AI, controlling both data access and actions is what ultimately determines whether AI is safe to deploy at scale.